Sufficient Reasons

Let $f$ be a Boolean function represented by a decision tree $T$, $x$ be an instance and $p$ be the prediction of $T$ on $x$ ($f(x) = p$), a sufficient reason for $x$ is a term of the binary representation of the instance that is a prime implicant of $f$ that covers $x$.

In other words, a sufficient reason for an instance $x$ given a class described by a Boolean function $f$ is a subset $t$ of the characteristics of $x$ that is minimal w.r.t. set inclusion and such that any instance $x’$ sharing this set $t$ of characteristics is classified by $f$ as $x$ is.

| <Explainer Object>.sufficient_reason(*, n=1, time_limit=None): |

|---|

| This method creates a CNF formula associated with the decision tree and solves it to find sufficient reasons. To achieve it, several calls to a SAT solver (Glucose) are performed and the result of each call is a sufficient reason. The method prevents finding the same sufficient reason twice or more by adding clauses (called blocking clauses) between each invocation. Returns n sufficient reasons of the current instance in a Tuple (when n is set to 1, does not return a Tuple but just the reason). Supports the excluded features. The reasons are in the form of binary variables, you must use the to_features method if you want to obtain a representation based on the features represented at start. |

n Integer Explainer.ALL: The desired number of sufficient reasons. Sets this to explainer.ALL to request all reasons. Default value is 1. |

time_limit Integer: The time limit of the method in seconds. Sets this to None to give this process an infinite amount of time. Default value is None. |

A sufficient reason is minimal w.r.t. set inclusion, i.e. there is no subset of this reason which is also a sufficient reason. A minimal sufficient reason for $x$ is a sufficient reason for $x$ that contains a minimal number of literals. In other words, a minimal sufficient reason has a minimal size.

| <ExplainerDT Object>.minimal_sufficient_reason(*, n=1, time_limit=None): |

|---|

| This method considers a CNF formula representing the decision tree as hard clauses and add binary variables representing the instance as unary soft clauses with weights equal to 1. Several calls to a MAXSAT solver (OPENWBO) are performed and the result of each call is a minimal sufficient reason. The minimal sufficient reasons are those with the lowest scores (i.e. the sum of weights). Thus, the algorithm stops when a sufficient non-minimal reason is found (i.e. when a higher score is found). Moreover, the method prevents finding the same sufficient reason twice or more by adding clauses (called blocking clauses) between each invocation. Returns n minimal sufficient reasons of the current instance in a Tuple (when n is set to 1, does not return a Tuple but just the reason). Supports the excluded features. The reasons are in the form of binary variables, you must use the to_features method if you want to obtain a representation based on the features considered at start. |

n Integer explainer.ALL: The desired number of sufficient reasons. Set this to Explainer.ALL to request all reasons. Default value is 1. |

time_limit Integer: The time limit of the method in seconds. Sets this to None to give this process an infinite amount of time. Default value is None. |

One can also compute prefered sufficient reasons. Indeed, the user may prefer reason containing some features and can provide weights in order to discriminate some features. Please take a look to the Preferences page for more information.

| <ExplainerRF Object>.prefered_sufficient_reason(*, method, n=1, time_limit=None, weights=None, features_partition=None): |

|---|

This method considers a CNF formula representing the decision tree as hard clauses and add binary variables representing the instance as unary soft clauses with weights equal to different values depending the method used. If the method is PreferredReasonMethod.WEIGHTS then weights are given by the parameter weights, otherwise this parameter is useless. If the method is PreferredReasonMethod.INCLUSION_PREFERRED then the partition of features is given by the parameter features_partition, otherwise this parameter is useless. Several calls to a MAXSAT solver (OPENWBO) are performed and the result of each call is a preferred sufficient reason. The method prevents finding the same reason twice or more by adding clauses (called blocking clauses) between each invocation.Returns n preferred majoritary reason of the current instance in a Tuple (when n is set to 1, does not return a Tuple but just the reason). Supports the excluded features. The reasons are in the form of binary variables, you must use the to_features method if you want to obtain a representation based on the features represented at start. |

method PreferredReasonMethod.WEIGHTS PreferredReasonMethods.SHAPLEY PreferredReasonMethod.FEATURE_IMPORTANCE PreferredReasonMethod.WORD_FREQUENCY: The method used to discriminate features. |

time_limit Integer None: The time limit of the method in seconds. Sets this to None to give this process an infinite amount of time. Default value is None. |

n Integer: The number of majoritary reasons computed. Currently n=1 or n=Exmplainer.ALL is only supported. Default value is 1. |

weights List: The weights (list of floats, one per feature, used to discriminate features. Useful when method is PreferredReasonMethod.WEIGHTS. Default value is None. |

features_partition List of List: The partition of features. The first elements are preferred to the second ones, and so on. Usefull when method is PreferredReasonMethod.INCLUSION_PREFERRED. Default value is None. |

The PyXAI library provides a way to check that a reason is sufficient:

| <Explainer Object>.is_sufficient_reason(reason, *, n_samples=50): |

|---|

This method checks if a reason is sufficient. To do that, it calls first the method is_reason to check whether n_samples complete binary representations from this reason (randomly generated) lead to the correct prediction. Secondly, it verifies the minimality of the reason w.r.t. set inclusion. To do that, it deletes a literal of the reason, tests with is_reason that this new binary representation is not a reason and puts back this literal. The method repeats this operation on every literal of the reason. Because this method is based on a given number of samples and random generation, it is not deterministic (i.e. it is not 100% sure to provide the right answer). It returns False if it is sure that the input reason is a sufficient one, True if it is a sufficient reason based on the n_samples tests and None if the answer is not sure. |

reason List of Integer: The reason to be checked. |

n_samples Integer: The number of samples to be considered, i.e., the number of complete binary representations to be generated randomly from the reason. Default value is 50. |

Reminder that the literals of a binary representation represent the conditions “<id_feature> <operator> <threshold> ?” (such as “$x_4 \ge 0.5$ ?”) implied by an instance. A literal $l$ of a binary representation is a necessary feature for $x$ if and only if $l$ belongs to every sufficient reason $t$ for $x$. In contrast, a literal $l$ of a binary representation is a relevant feature for $x$ if and only if $l$ belongs to at least one sufficient reason $t$ for $x$. PyXAI provides methods to compute them:

| <ExplainerDT Object>.necessary_literals(): |

|---|

Returns a List containing necessary literals, i.e. literals belonging to every sufficient reason. |

| <ExplainerDT Object>.relevant_literals(): |

|---|

Returns a List containing relevant literals, i.e. literals belonging to at least one sufficient reason. |

For a given instance, it can be interesting to compute the number of sufficient reasons or the number of sufficient reasons per literal of the binary representation. PyXAI allows this:

| <ExplainerDT Object>.n_sufficient_reasons(*, time_limit=None): |

|---|

Returns the number of sufficient reasons . This method uses the D4 compiler to count the models of a CNF formula representing the tree. Supports the excluded features. Returns None if time_limit was reached. |

time_limit Integer None: The time limit of the method in seconds. Sets this to None to give this process an infinite amount of time. Default value is None. |

| <ExplainerDT Object>.n_sufficient_reasons_per_literal(*, time_limit=None): |

|---|

Returns the number of sufficient reasons per literal in the form of a python dictionary where the keys are the literals and the values the numbers of sufficient reasons. Returns an empty dictionnary if time_limit is reached. This method uses the D4 compiler to count the models of a CNF formula representing the decision tree. Supports the excluded features. The results are in the form of binary variables, you must use the to_features method if you want to obtain a representatation based on features represented at start. |

time_limit Integer None: The time limit of the method in seconds. Sets this to None to give this process an infinite amount of time. Default value is None. |

More information about sufficient reasons and minimal sufficient reasons can be found in the paper On the Explanatory Power of Decision Trees. The basic methods (initialize, set_instance, to_features, is_reason, …) of the Explainer module used in the next examples are described in the Explainer Principles page.

Example from a Hand-Crafted Tree

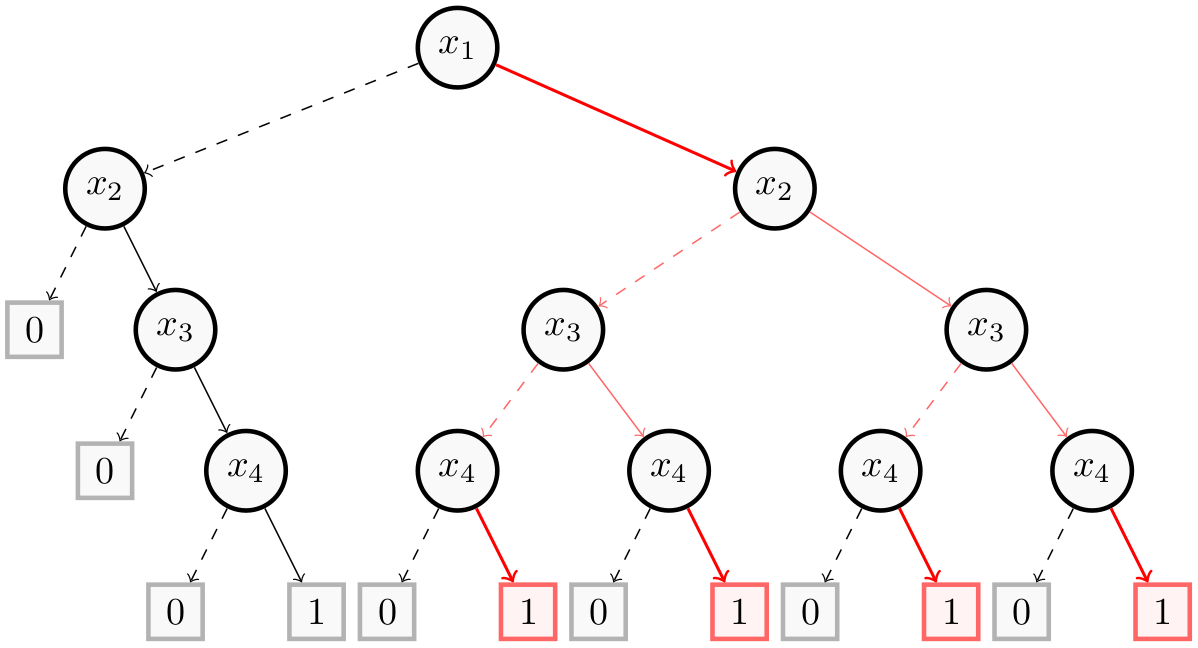

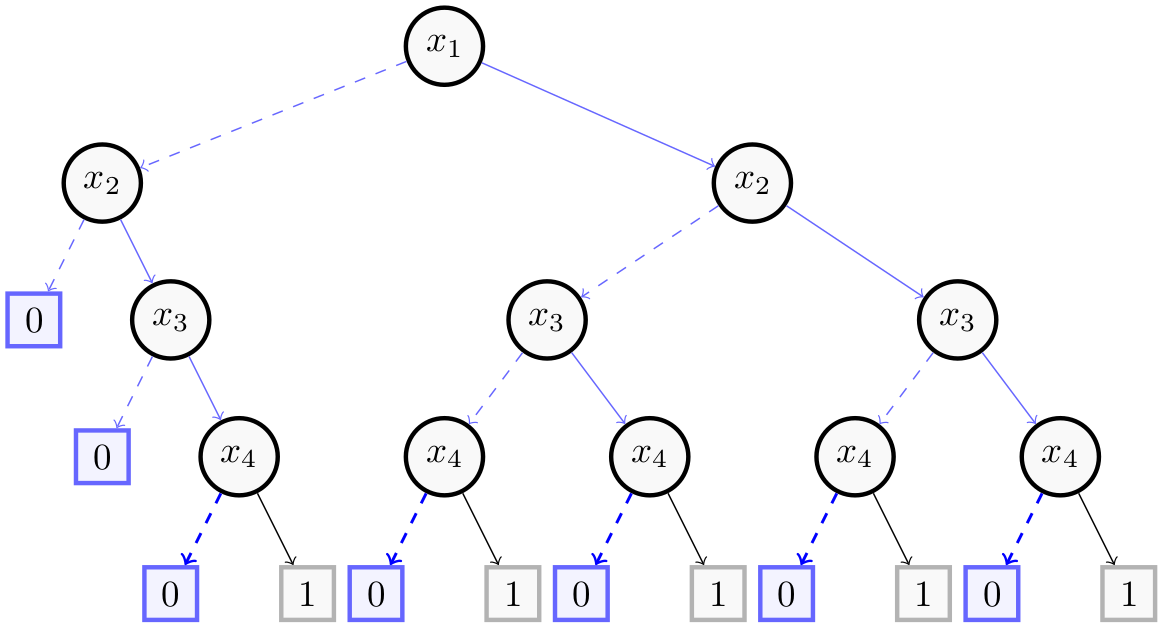

For this example, we take the Decision Tree of the Building Models page consisting of $4$ binary features ($x_1$, $x_2$, $x_3$ and $x_4$).

The following figure shows in red and bold a minimal sufficient reason $(x_1, x_4)$ for the instance $(1,1,1,1)$.

The next figure gives in blue and bold a minimal sufficient reason $(-x_4)$ for the instance $(0,0,0,0)$.

We now show how to get those reasons with PyXAI. We start by building the decision tree:

from pyxai import Builder, Explainer

node_x4_1 = Builder.DecisionNode(4, left=0, right=1)

node_x4_2 = Builder.DecisionNode(4, left=0, right=1)

node_x4_3 = Builder.DecisionNode(4, left=0, right=1)

node_x4_4 = Builder.DecisionNode(4, left=0, right=1)

node_x4_5 = Builder.DecisionNode(4, left=0, right=1)

node_x3_1 = Builder.DecisionNode(3, left=0, right=node_x4_1)

node_x3_2 = Builder.DecisionNode(3, left=node_x4_2, right=node_x4_3)

node_x3_3 = Builder.DecisionNode(3, left=node_x4_4, right=node_x4_5)

node_x2_1 = Builder.DecisionNode(2, left=0, right=node_x3_1)

node_x2_2 = Builder.DecisionNode(2, left=node_x3_2, right=node_x3_3)

node_x1_1 = Builder.DecisionNode(1, left=node_x2_1, right=node_x2_2)

tree = Builder.DecisionTree(4, node_x1_1, force_features_equal_to_binaries=True)

And we compute the sufficient reasons for each of these two instances:

explainer = Explainer.initialize(tree)

explainer.set_instance((1,1,1,1))

sufficient_reasons = explainer.sufficient_reason(n=Explainer.ALL)

print("sufficient_reasons:", sufficient_reasons)

assert sufficient_reasons == ((1, 4), (2, 3, 4)), "The sufficient reasons are not good !"

for sufficient in sufficient_reasons:

print("to_features:", explainer.to_features(sufficient))

assert explainer.is_sufficient_reason(sufficient), "This is have to be a sufficient reason !"

minimals = explainer.minimal_sufficient_reason()

print("minimal_sufficient_reason:", minimals)

assert minimals == (1, 4), "The minimal sufficient reasons are not good !"

print("-------------------------------")

explainer.set_instance((0,0,0,0))

sufficient_reasons = explainer.sufficient_reason(n=Explainer.ALL)

print("sufficient_reasons:", sufficient_reasons)

assert sufficient_reasons == ((-4,), (-1, -2), (-1, -3)), "The sufficient reasons are not good !"

for sufficient in sufficient_reasons:

print("to_features:", explainer.to_features(sufficient))

assert explainer.is_sufficient_reason(sufficient), "This is have to be a sufficient reason !"

minimals = explainer.minimal_sufficient_reason(n=1)

print("minimal_sufficient_reasons:", minimals)

assert minimals == (-4,), "The minimal sufficient reasons are not good !"

sufficient_reasons: ((1, 4), (2, 3, 4))

to_features: ('f1 >= 0.5', 'f4 >= 0.5')

to_features: ('f2 >= 0.5', 'f3 >= 0.5', 'f4 >= 0.5')

minimal_sufficient_reason: (1, 4)

-------------------------------

sufficient_reasons: ((-4,), (-1, -2), (-1, -3))

to_features: ('f4 < 0.5',)

to_features: ('f1 < 0.5', 'f2 < 0.5')

to_features: ('f1 < 0.5', 'f3 < 0.5')

minimal_sufficient_reasons: (-4,)

Example from a Real Dataset

For this example, we take the compas.csv dataset. We create a model using the hold-out approach (by default, the test size is set to 30%) and select a well-classified instance.

from pyxai import Learning, Explainer

learner = Learning.Scikitlearn("../../../dataset/compas.csv", learner_type=Learning.CLASSIFICATION)

model = learner.evaluate(method=Learning.HOLD_OUT, output=Learning.DT)

instance, prediction = learner.get_instances(model, n=1, correct=True)

data:

Number_of_Priors score_factor Age_Above_FourtyFive

0 0 0 1 \

1 0 0 0

2 4 0 0

3 0 0 0

4 14 1 0

... ... ... ...

6167 0 1 0

6168 0 0 0

6169 0 0 1

6170 3 0 0

6171 2 0 0

Age_Below_TwentyFive African_American Asian Hispanic

0 0 0 0 0 \

1 0 1 0 0

2 1 1 0 0

3 0 0 0 0

4 0 0 0 0

... ... ... ... ...

6167 1 1 0 0

6168 1 1 0 0

6169 0 0 0 0

6170 0 1 0 0

6171 1 0 0 1

Native_American Other Female Misdemeanor Two_yr_Recidivism

0 0 1 0 0 0

1 0 0 0 0 1

2 0 0 0 0 1

3 0 1 0 1 0

4 0 0 0 0 1

... ... ... ... ... ...

6167 0 0 0 0 0

6168 0 0 0 0 0

6169 0 1 0 0 0

6170 0 0 1 1 0

6171 0 0 1 0 1

[6172 rows x 12 columns]

-------------- Information ---------------

Dataset name: ../../../dataset/compas.csv

nFeatures (nAttributes, with the labels): 12

nInstances (nObservations): 6172

nLabels: 2

--------------- Evaluation ---------------

method: HoldOut

output: DT

learner_type: Classification

learner_options: {'max_depth': None, 'random_state': 0}

--------- Evaluation Information ---------

For the evaluation number 0:

metrics:

accuracy: 65.33477321814254

nTraining instances: 4320

nTest instances: 1852

--------------- Explainer ----------------

For the evaluation number 0:

**Decision Tree Model**

nFeatures: 11

nNodes: 539

nVariables: 46

--------------- Instances ----------------

number of instances selected: 1

----------------------------------------------

And we compute a sufficient reason for this instance:

explainer = Explainer.initialize(model, instance)

print("instance:", instance)

print("prediction:", prediction)

print()

sufficient_reason = explainer.sufficient_reason(n=1)

#for s in sufficient_reasons:

print("\nsufficient reason:", len(sufficient_reason))

print("to features", explainer.to_features(sufficient_reason))

print("is sufficient_reason (for max 50 checks): ", explainer.is_sufficient_reason(sufficient_reason, n_samples=50))

print()

minimal = explainer.minimal_sufficient_reason()

print("\nminimal:", len(minimal))

print("is sufficient_reason (for max 50 checks): ", explainer.is_sufficient_reason(sufficient_reason, n_samples=50))

print()

print("\nnecessary literals: ", explainer.necessary_literals())

print("\nnecessary literals features: ", explainer.to_features(explainer.necessary_literals()))

print("\nrelevant literals: ", explainer.relevant_literals())

print()

print("n sufficient reasons:", explainer.n_sufficient_reasons())

sufficient_reasons_per_attribute = explainer.n_sufficient_reasons_per_attribute()

print("\nsufficient_reasons_per_attribute:", sufficient_reasons_per_attribute)

print("\nsufficient_reasons_per_attribute features:", explainer.to_features(sufficient_reasons_per_attribute, details=True))

instance: [0 0 1 0 0 0 0 0 1 0 0]

prediction: 0

sufficient reason: 4

to features ('Number_of_Priors <= 0.5', 'score_factor <= 0.5', 'Age_Below_TwentyFive <= 0.5')

is sufficient_reason (for max 50 checks): True

minimal: 4

is sufficient_reason (for max 50 checks): True

necessary literals: [-3, -1]

necessary literals features: ('score_factor <= 0.5', 'Age_Below_TwentyFive <= 0.5')

relevant literals: [-4, -7, -2, -9, -2, -14, -4, 5, -11, -4, 5, -13, -2, 5, -15, -2, 5, -8, -2, -11, -15, -2, -12, -17, -2, -7, -17, -2, 6, -17, -2, -11, -17, -4, 6, -9, -12, -4, 6, -12, -13, -2, 5, -12, -18, -2, -8, -17, -19, -4, 6, -9, -11, -13]

n sufficient reasons: 25

sufficient_reasons_per_attribute: {-3: 25, -1: 25, -4: 6, -7: 20, -2: 9, -9: 19, -14: 16, 5: 15, -11: 14, -13: 10, -15: 10, -8: 7, -12: 12, -17: 12, 6: 8, -18: 2, -19: 1}

sufficient_reasons_per_attribute features: OrderedDict([('Number_of_Priors', [{'id': 1, 'name': 'Number_of_Priors', 'operator': <OperatorCondition.GT: 52>, 'sign': True, 'operator_sign_considered': <OperatorCondition.LE: 51>, 'threshold': 0.5, 'weight': 6, 'theory': None, 'string': 'Number_of_Priors <= 0.5'}]), ('score_factor', [{'id': 2, 'name': 'score_factor', 'operator': <OperatorCondition.GT: 52>, 'sign': True, 'operator_sign_considered': <OperatorCondition.LE: 51>, 'threshold': 0.5, 'weight': 25, 'theory': None, 'string': 'score_factor <= 0.5'}]), ('Age_Above_FourtyFive', [{'id': 3, 'name': 'Age_Above_FourtyFive', 'operator': <OperatorCondition.GT: 52>, 'sign': False, 'operator_sign_considered': <OperatorCondition.GT: 52>, 'threshold': 0.5, 'weight': 15, 'theory': None, 'string': 'Age_Above_FourtyFive > 0.5'}]), ('Age_Below_TwentyFive', [{'id': 4, 'name': 'Age_Below_TwentyFive', 'operator': <OperatorCondition.GT: 52>, 'sign': True, 'operator_sign_considered': <OperatorCondition.LE: 51>, 'threshold': 0.5, 'weight': 25, 'theory': None, 'string': 'Age_Below_TwentyFive <= 0.5'}]), ('African_American', [{'id': 5, 'name': 'African_American', 'operator': <OperatorCondition.GT: 52>, 'sign': True, 'operator_sign_considered': <OperatorCondition.LE: 51>, 'threshold': 0.5, 'weight': 7, 'theory': None, 'string': 'African_American <= 0.5'}]), ('Asian', [{'id': 6, 'name': 'Asian', 'operator': <OperatorCondition.GT: 52>, 'sign': True, 'operator_sign_considered': <OperatorCondition.LE: 51>, 'threshold': 0.5, 'weight': 20, 'theory': None, 'string': 'Asian <= 0.5'}]), ('Hispanic', [{'id': 7, 'name': 'Hispanic', 'operator': <OperatorCondition.GT: 52>, 'sign': True, 'operator_sign_considered': <OperatorCondition.LE: 51>, 'threshold': 0.5, 'weight': 12, 'theory': None, 'string': 'Hispanic <= 0.5'}]), ('Other', [{'id': 9, 'name': 'Other', 'operator': <OperatorCondition.GT: 52>, 'sign': False, 'operator_sign_considered': <OperatorCondition.GT: 52>, 'threshold': 0.5, 'weight': 8, 'theory': None, 'string': 'Other > 0.5'}]), ('Female', [{'id': 10, 'name': 'Female', 'operator': <OperatorCondition.GT: 52>, 'sign': True, 'operator_sign_considered': <OperatorCondition.LE: 51>, 'threshold': 0.5, 'weight': 19, 'theory': None, 'string': 'Female <= 0.5'}]), ('Misdemeanor', [{'id': 11, 'name': 'Misdemeanor', 'operator': <OperatorCondition.GT: 52>, 'sign': True, 'operator_sign_considered': <OperatorCondition.LE: 51>, 'threshold': 0.5, 'weight': 14, 'theory': None, 'string': 'Misdemeanor <= 0.5'}])])

Other types of explanations are presented in the Explanations Computation page.