Direct Reason

Let $T$ be a decision tree and $x$ an instance. The direct reason for $x$ is the term of the binary representation of the instance that corresponds to the unique root-to-leaf path of $T$ compatible with $x$. It is one of the easiest reasons to compute, but it is in general redundant. More information about the direct reason can be found in the paper "On the Explanatory Power of Decision Trees".
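To make the definition concrete, here is a minimal pure-Python sketch that does not use PyXAI. It assumes a hypothetical encoding in which an internal node is a tuple (feature, left, right), a leaf is an integer label, and the right branch is followed when the feature equals 1. The literal i stands for $x_i = 1$ and -i for $x_i = 0$. The names direct_reason and toy_tree are illustrative only.

```python
# Illustrative only: a toy tree encoding, not PyXAI's internal representation.
# An internal node is (feature_index, left_child, right_child); a leaf is an int label.
# Assumption: the left branch is followed when the feature is 0, the right one when it is 1.

def direct_reason(tree, instance):
    """Collect the literals tested along the root-to-leaf path compatible with `instance`."""
    literals = []
    node = tree
    while isinstance(node, tuple):
        feature, left, right = node
        if instance[feature - 1] == 1:
            literals.append(feature)    # x_feature = 1 on this path
            node = right
        else:
            literals.append(-feature)   # x_feature = 0 on this path
            node = left
    return tuple(literals), node        # (term, predicted label)


# Hypothetical tree over three binary features: x1 at the root, then x2 or x3.
toy_tree = (1, (2, 0, 1), (3, 0, (2, 0, 1)))

reason, label = direct_reason(toy_tree, (0, 1, 1))
print(reason, label)  # (-1, 2) 1
```

Only the features tested on the path appear in the term: here $x_3$ is never consulted for the instance $(0,1,1)$, so it is absent from the result.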

| <Explainer Object>.direct_reason(): |
|---|
| Returns the direct reason of the current instance. Returns None if this reason contains some excluded features. The reason is expressed with binary variables; use the to_features method to obtain it in terms of the original features. |
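Because direct_reason() can return None, calling len() or to_features on its result without a check may fail. A small guard such as the following sketch can help; the helper name describe_direct_reason is hypothetical, and it relies only on the direct_reason and to_features methods described on this page.

```python
def describe_direct_reason(explainer):
    """Return the direct reason as feature conditions, or None if it is unavailable.

    `explainer` is assumed to be an Explainer object whose instance is already set.
    This helper is illustrative and not part of PyXAI.
    """
    reason = explainer.direct_reason()
    if reason is None:
        # Documented case: the reason contains some excluded features.
        return None
    return explainer.to_features(reason)
```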

The basic methods (initialize, set_instance, to_features, is_reason, …) of the Explainer module used in the next examples are described in the Explainer Principles page.

Example from a Hand-Crafted Tree

For this example, we take the Decision Tree of the Building Models page.

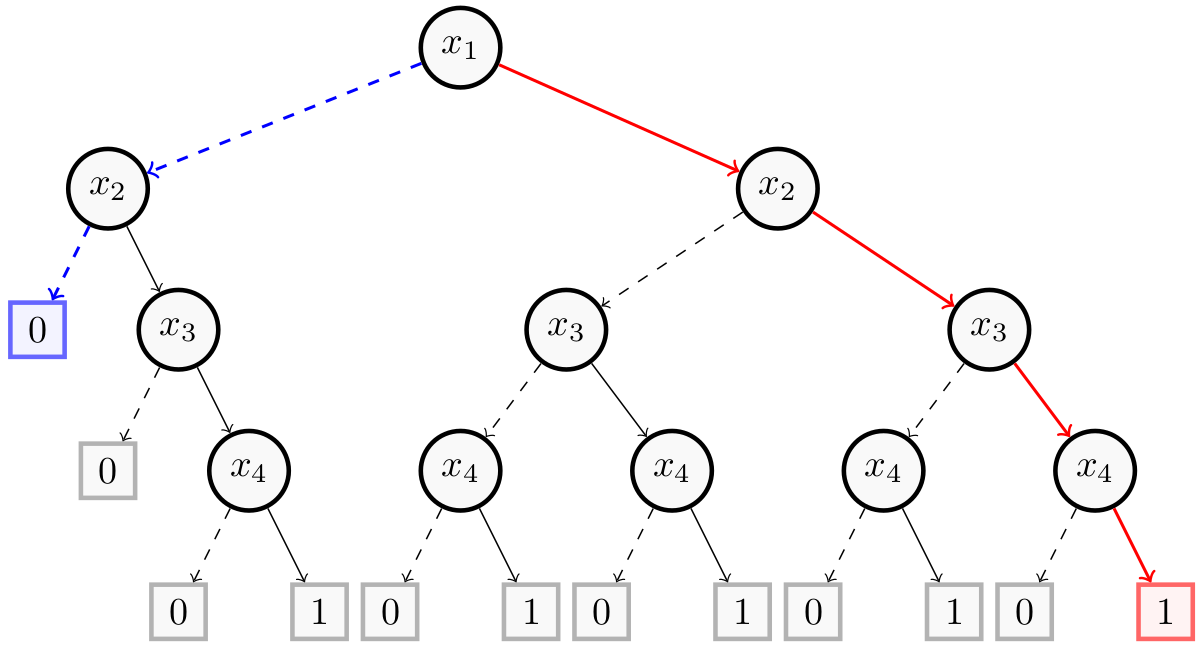

This figure represents a Decision Tree using $4$ binary features ($x_1$, $x_2$, $x_3$ and $x_4$). The direct reason for the instance $(1,1,1,1)$ is in red and the one for $(0,0,0,0)$ is in blue. Now, we show how to get them with PyXAI. We start by building the decision tree:

```python
from pyxai import Builder, Explainer

node_x4_1 = Builder.DecisionNode(4, left=0, right=1)
node_x4_2 = Builder.DecisionNode(4, left=0, right=1)
node_x4_3 = Builder.DecisionNode(4, left=0, right=1)
node_x4_4 = Builder.DecisionNode(4, left=0, right=1)
node_x4_5 = Builder.DecisionNode(4, left=0, right=1)

node_x3_1 = Builder.DecisionNode(3, left=0, right=node_x4_1)
node_x3_2 = Builder.DecisionNode(3, left=node_x4_2, right=node_x4_3)
node_x3_3 = Builder.DecisionNode(3, left=node_x4_4, right=node_x4_5)

node_x2_1 = Builder.DecisionNode(2, left=0, right=node_x3_1)
node_x2_2 = Builder.DecisionNode(2, left=node_x3_2, right=node_x3_3)

node_x1_1 = Builder.DecisionNode(1, left=node_x2_1, right=node_x2_2)

tree = Builder.DecisionTree(4, node_x1_1, force_features_equal_to_binaries=True)
```

And we compute the direct reasons for these two instances:

```python
explainer = Explainer.initialize(tree)

explainer.set_instance((1,1,1,1))
direct = explainer.direct_reason()
print("instance: (1,1,1,1)")
print("binary representation:", explainer.binary_representation)
print("target_prediction:", explainer.target_prediction)
print("direct:", direct)
print("to_features:", explainer.to_features(direct))
print("------------------------------------------------")

explainer.set_instance((0,0,0,0))
direct = explainer.direct_reason()
print("instance: (0,0,0,0)")
print("binary representation:", explainer.binary_representation)
print("target_prediction:", explainer.target_prediction)
print("direct:", direct)
print("to_features:", explainer.to_features(direct))
```

```
instance: (1,1,1,1)
binary representation: (1, 2, 3, 4)
target_prediction: 1
direct: (1, 2, 3, 4)
to_features: ('f1 >= 0.5', 'f2 >= 0.5', 'f3 >= 0.5', 'f4 >= 0.5')
------------------------------------------------
instance: (0,0,0,0)
binary representation: (-1, -2, -3, -4)
target_prediction: 0
direct: (-1, -2)
to_features: ('f1 < 0.5', 'f2 < 0.5')
```
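These results can be used to illustrate why the direct reason is in general redundant. The following sketch is independent of PyXAI: it re-encodes the hand-crafted tree as nested tuples (a hypothetical encoding, with the left branch followed when the feature is 0) and checks by brute force whether a term forces the prediction, that is, whether every instance compatible with the term receives the same label. The function names are illustrative.

```python
from itertools import product

# Same structure as the hand-crafted tree, in a hypothetical nested-tuple encoding:
# (feature_index, left_child, right_child), with an int label at the leaves.
x4 = (4, 0, 1)
tree = (1,
        (2, 0, (3, 0, x4)),
        (2, (3, x4, x4), (3, x4, x4)))


def predict(node, instance):
    """Follow the left branch when the feature is 0 and the right branch when it is 1."""
    while isinstance(node, tuple):
        feature, left, right = node
        node = right if instance[feature - 1] == 1 else left
    return node


def forces_prediction(term, target, n_features=4):
    """True if every instance satisfying all literals of `term` is classified as `target`."""
    for instance in product((0, 1), repeat=n_features):
        compatible = all(instance[abs(lit) - 1] == (1 if lit > 0 else 0) for lit in term)
        if compatible and predict(tree, instance) != target:
            return False
    return True


print(forces_prediction((1, 2, 3, 4), 1))  # direct reason for (1,1,1,1): True
print(forces_prediction((1, 4), 1))        # a strict subset of it: True
print(forces_prediction((4,), 1))          # dropping x1 as well: False
```

Under this encoding, the term $(1, 4)$ already guarantees the prediction $1$, so the literals $2$ and $3$ of the direct reason for $(1,1,1,1)$ are redundant. Exhaustive enumeration is only practical for a handful of binary variables; it is used here purely for illustration.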

Example from a Real Dataset

For this example, we take the compas dataset. We create a model using the hold-out approach (by default, the test size is set to 30%) and select a well-classified instance.

```python
from pyxai import Learning, Explainer

learner = Learning.Scikitlearn("../../../dataset/compas.csv", learner_type=Learning.CLASSIFICATION)
model = learner.evaluate(method=Learning.HOLD_OUT, output=Learning.DT)
instance, prediction = learner.get_instances(model, n=1, correct=True)
```

```
data:
      Number_of_Priors  score_factor  Age_Above_FourtyFive
0                    0             0                     1  \
1                    0             0                     0
2                    4             0                     0
3                    0             0                     0
4                   14             1                     0
...                ...           ...                   ...
6167                 0             1                     0
6168                 0             0                     0
6169                 0             0                     1
6170                 3             0                     0
6171                 2             0                     0

      Age_Below_TwentyFive  African_American  Asian  Hispanic
0                        0                 0      0         0  \
1                        0                 1      0         0
2                        1                 1      0         0
3                        0                 0      0         0
4                        0                 0      0         0
...                    ...               ...    ...       ...
6167                     1                 1      0         0
6168                     1                 1      0         0
6169                     0                 0      0         0
6170                     0                 1      0         0
6171                     1                 0      0         1

      Native_American  Other  Female  Misdemeanor  Two_yr_Recidivism
0                   0      1       0            0                  0
1                   0      0       0            0                  1
2                   0      0       0            0                  1
3                   0      1       0            1                  0
4                   0      0       0            0                  1
...               ...    ...     ...          ...                ...
6167                0      0       0            0                  0
6168                0      0       0            0                  0
6169                0      1       0            0                  0
6170                0      0       1            1                  0
6171                0      0       1            0                  1

[6172 rows x 12 columns]
--------------   Information   ---------------
Dataset name: ../../../dataset/compas.csv
nFeatures (nAttributes, with the labels): 12
nInstances (nObservations): 6172
nLabels: 2
---------------   Evaluation   ---------------
method: HoldOut
output: DT
learner_type: Classification
learner_options: {'max_depth': None, 'random_state': 0}
---------   Evaluation Information   ---------
For the evaluation number 0:
metrics:
   accuracy: 65.33477321814254
nTraining instances: 4320
nTest instances: 1852

---------------   Explainer   ----------------
For the evaluation number 0:
**Decision Tree Model**
nFeatures: 11
nNodes: 539
nVariables: 46

---------------   Instances   ----------------
number of instances selected: 1
----------------------------------------------
```

Finally, we compute the direct reason for this instance:

```python
explainer = Explainer.initialize(model, instance)
print("instance:", instance)
print("prediction:", prediction)
print()

direct_reason = explainer.direct_reason()
print("len binary representation:", len(explainer.binary_representation))
print("len direct:", len(direct_reason))
print("is_reason:", explainer.is_reason(direct_reason))
print("to_features:", explainer.to_features(direct_reason))
```

```
instance: [0 0 1 0 0 0 0 0 1 0 0]
prediction: 0

len binary representation: 46
len direct: 9
is_reason: True
to_features: ('Number_of_Priors <= 0.5', 'score_factor <= 0.5', 'Age_Above_FourtyFive > 0.5', 'Age_Below_TwentyFive <= 0.5', 'African_American <= 0.5', 'Other > 0.5', 'Female <= 0.5', 'Misdemeanor <= 0.5')
```

Note that this direct reason contains 9 binary variables out of the 46 variables of the binary representation. It explains why the model predicts $0$ for this instance, but it is probably not the most compact abductive explanation for the instance. We invite you to take a look at the other types of reasons presented on the Decision Tree Explanations page.