Contrastive Reasons

Unlike abductive explanations that explain why an instance $x$ is classified as belonging to a given class, contrastive explanations explain why $x$ has not been classified by the ML model as expected.

Let $f$ be a Boolean function represented by a decision tree $T$, $x$ be an instance and $p$ the prediction of $T$ on $x$ ($f(x) = p$). A contrastive reason for $x$ is a term $t$ such that:

- $t \subseteq t_{x}$ and $t_{x} \setminus t$ is not an implicant of $f$;

- for every $\ell \in t$, $t \setminus \{\ell\}$ does not satisfy the previous condition (that is, $t$ is minimal w.r.t. set inclusion).

Put differently, a contrastive reason for $x$ is a subset $t$ of the characteristics of $x$ that is minimal w.r.t. set inclusion among those such that at least one instance $x'$ that coincides with $x$ except on the characteristics from $t$ is not classified by the decision tree as $x$ is. Put simply, a contrastive reason represents the feature changes needed to change the prediction for an instance.
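The definition can be turned into a brute-force search, which is practical only for very small examples. The sketch below is plain Python and does not use PyXAI. The toy function `f`, the helper name `contrastive_reasons` and the encoding of a reason as a tuple of 1-based feature indices are assumptions made for illustration. Subsets are enumerated by increasing size, and a subset is kept when flipping exactly its features changes the prediction and no smaller reason is contained in it.

```python
from itertools import combinations


def contrastive_reasons(f, x):
    """Return all subset-minimal sets of 1-based feature indices whose
    flipping changes f(x). Exponential in len(x): tiny examples only."""
    n = len(x)
    target = f(x)
    found = []
    for size in range(1, n + 1):
        for subset in combinations(range(1, n + 1), size):
            # A superset of an already found reason cannot be minimal.
            if any(set(r) <= set(subset) for r in found):
                continue
            flipped = tuple(1 - v if i in subset else v
                            for i, v in enumerate(x, start=1))
            if f(flipped) != target:
                found.append(subset)
    return found


# Toy Boolean function (illustrative assumption): f = x1 AND (x2 OR x3)
def f(x):
    x1, x2, x3 = x
    return int(x1 and (x2 or x3))


reasons = contrastive_reasons(f, (1, 1, 1))
print(reasons)  # [(1,), (2, 3)]
```

For the instance $(1,1,1)$, flipping $x_1$ alone changes the prediction, and so does flipping $x_2$ and $x_3$ together, while neither $x_2$ nor $x_3$ alone is enough.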

A contrastive reason is minimal w.r.t. set inclusion, i.e., no proper subset of this reason is also a contrastive reason. A minimal contrastive reason for $x$ is a contrastive reason for $x$ that contains a minimal number of literals. In other words, a minimal contrastive reason has a minimal size.

More information about contrastive reasons can be found in the paper On the Explanatory Power of Decision Trees.

| <ExplainerDT Object>.contrastive_reason(*, n=1): |
|---|
| Extracts the contrastive reasons for an instance by taking the literals of the binary representation in the CNF formula associated with the decision tree and the given instance. Returns n contrastive reasons for the current instance, sorted by increasing size, in a Tuple (when n is set to 1, it returns the single reason rather than a Tuple). Supports excluded features. The reasons are expressed as binary variables; use the to_features method to obtain a representation based on the original features. |
| n Integer or Explainer.ALL: The desired number of contrastive reasons. Set this to Explainer.ALL to request all reasons. Default value is 1. |

As the contrastive_reason method returns the contrastive reasons in ascending order of size, the minimal contrastive reasons are the first ones in the returned tuple.
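Because of this ordering, the minimal contrastive reasons can be read off the front of the tuple. The helper below is a small sketch in plain Python; the name `minimal_reasons` and the sample tuple are illustrative assumptions, and the helper expects a tuple of reasons (as returned when several reasons are requested), not the single reason returned when n is 1.

```python
def minimal_reasons(reasons):
    """Keep only the smallest reasons from a tuple sorted by increasing size."""
    if not reasons:
        return ()
    smallest = len(reasons[0])  # the first reason has the minimal size
    return tuple(r for r in reasons if len(r) == smallest)


# Illustrative tuple of reasons, sorted by increasing size.
example = ((4,), (1, 2), (1, 3))
print(minimal_reasons(example))  # ((4,),)
```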

The PyXAI library provides a way to check that a reason is contrastive:

| <Explainer Object>.is_contrastive_reason(reason): |
|---|
| Replaces in the binary representation of the instance each literal of the reason with its opposite and checks that the result does not predict the same class as the initial instance. Returns True if the reason is contrastive, False otherwise. |
| reason List of Integer: The reason to check. |
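The check described above can be sketched in a few lines of plain Python. This is not the PyXAI implementation: the function name `is_contrastive`, the toy `predict` model and the encoding of literals as signed integers ($k$ when binary variable $k$ is true, $-k$ when it is false) are assumptions for illustration. Following the description, the sketch only tests that the prediction changes; it does not test minimality.

```python
def is_contrastive(predict, binary_instance, reason):
    """Flip every literal of `reason` in `binary_instance` and report
    whether the predicted class changes."""
    flipped = tuple(-lit if lit in reason else lit for lit in binary_instance)
    return predict(flipped) != predict(binary_instance)


# Toy model over two binary variables (assumption): predicts 1 iff both are true.
def predict(binary_instance):
    return int(all(lit > 0 for lit in binary_instance))


print(is_contrastive(predict, (1, 2), (1,)))     # True: (-1, 2) is predicted 0
print(is_contrastive(predict, (-1, -2), (-1,)))  # False: (1, -2) is still predicted 0
```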

The basic methods (initialize, set_instance, to_features, is_reason, …) of the Explainer module used in the next examples are described in the Explainer Principles page.

Example from a Hand-Crafted Tree

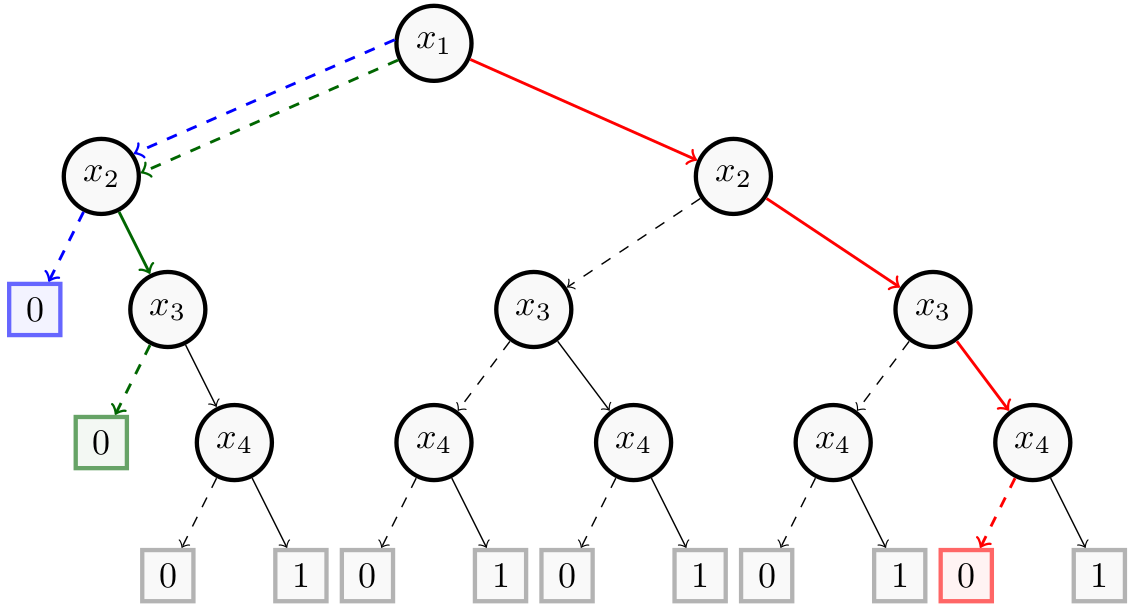

For this example, we take the Decision Tree of the Building Models page consisting of $4$ binary features ($x_1$, $x_2$, $x_3$ and $x_4$).

The following figure shows the new instances $(1,1,1,0)$, $(0,0,1,1)$ and $(0,1,0,1)$ obtained from the instance $x = (1,1,1,1)$ by applying its contrastive reasons $(x_4)$ (in red), $(x_1, x_2)$ (in blue) and $(x_1, x_3)$ (in green), respectively. Each new instance differs from $x$ only on the features of the corresponding contrastive reason and is not classified by $T$ as $x$ is: $(1,1,1,0)$, $(0,0,1,1)$ and $(0,1,0,1)$ are classified as negative instances, while $(1,1,1,1)$ is classified as a positive instance.

Now, we show how to get them with PyXAI. We start by building the decision tree:

```python
from pyxai import Builder, Explainer

# Build the tree bottom-up: leaves are the labels 0/1, internal nodes test one feature.
node_x4_1 = Builder.DecisionNode(4, left=0, right=1)
node_x4_2 = Builder.DecisionNode(4, left=0, right=1)
node_x4_3 = Builder.DecisionNode(4, left=0, right=1)
node_x4_4 = Builder.DecisionNode(4, left=0, right=1)
node_x4_5 = Builder.DecisionNode(4, left=0, right=1)

node_x3_1 = Builder.DecisionNode(3, left=0, right=node_x4_1)
node_x3_2 = Builder.DecisionNode(3, left=node_x4_2, right=node_x4_3)
node_x3_3 = Builder.DecisionNode(3, left=node_x4_4, right=node_x4_5)

node_x2_1 = Builder.DecisionNode(2, left=0, right=node_x3_1)
node_x2_2 = Builder.DecisionNode(2, left=node_x3_2, right=node_x3_3)

node_x1_1 = Builder.DecisionNode(1, left=node_x2_1, right=node_x2_2)

tree = Builder.DecisionTree(4, node_x1_1, force_features_equal_to_binaries=True)
```

We compute the contrastive reasons for two instances, $(1,1,1,1)$ and $(0,0,0,0)$:

```python
explainer = Explainer.initialize(tree)

# First instance: (1,1,1,1)
explainer.set_instance((1,1,1,1))
contrastives = explainer.contrastive_reason(n=Explainer.ALL)
print("Contrastives:", contrastives)
for contrastive in contrastives:
    assert explainer.is_contrastive_reason(contrastive), "This has to be a contrastive reason!"

print("-------------------------------")

# Second instance: (0,0,0,0)
explainer.set_instance((0,0,0,0))
contrastives = explainer.contrastive_reason(n=Explainer.ALL)
print("Contrastives:", contrastives)
for contrastive in contrastives:
    assert explainer.is_contrastive_reason(contrastive), "This has to be a contrastive reason!"
```

    Contrastives: ((4,), (1, 2), (1, 3))
    -------------------------------
    Contrastives: ((-1, -4), (-2, -3, -4))
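The predictions quoted for the four instances of this example can be cross-checked without PyXAI by mirroring the tree as nested tuples and walking it. This is a sketch under one assumption, which is consistent with the classifications stated above: the left child is followed when the tested feature is 0 and the right child when it is 1. The tuple encoding and the function name `predict` are illustrative.

```python
# Each internal node is (feature_index, left_child, right_child); leaves are 0 or 1.
# Assumption: go left when the feature is 0, right when it is 1.
x4 = (4, 0, 1)
x3_1 = (3, 0, x4)
x3_2 = (3, x4, x4)
x3_3 = (3, x4, x4)
x2_1 = (2, 0, x3_1)
x2_2 = (2, x3_2, x3_3)
root = (1, x2_1, x2_2)


def predict(node, x):
    """Walk the tree from `node` down to a leaf for the instance `x`."""
    while not isinstance(node, int):
        feature, left, right = node
        node = right if x[feature - 1] == 1 else left
    return node


for x in [(1, 1, 1, 1), (1, 1, 1, 0), (0, 0, 1, 1), (0, 1, 0, 1)]:
    print(x, "->", predict(root, x))
```

Only $(1,1,1,1)$ reaches a leaf labelled 1; the three instances obtained by flipping $(x_4)$, $(x_1, x_2)$ and $(x_1, x_3)$ all reach a leaf labelled 0.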

Example from a Real Dataset

For this example, we take the compas dataset. We create a model using the hold-out approach (by default, the test size is set to 30%) and select a well-classified instance.

```python
from pyxai import Learning, Explainer

learner = Learning.Scikitlearn("../../../dataset/compas.csv", learner_type=Learning.CLASSIFICATION)
model = learner.evaluate(method=Learning.HOLD_OUT, output=Learning.DT)
instance, prediction = learner.get_instances(model, n=1, correct=True)
```

    data:
          Number_of_Priors  score_factor  Age_Above_FourtyFive  \
    0                    0             0                     1
    1                    0             0                     0
    2                    4             0                     0
    3                    0             0                     0
    4                   14             1                     0
    ...                ...           ...                   ...
    6167                 0             1                     0
    6168                 0             0                     0
    6169                 0             0                     1
    6170                 3             0                     0
    6171                 2             0                     0

          Age_Below_TwentyFive  African_American  Asian  Hispanic  \
    0                        0                 0      0         0
    1                        0                 1      0         0
    2                        1                 1      0         0
    3                        0                 0      0         0
    4                        0                 0      0         0
    ...                    ...               ...    ...       ...
    6167                     1                 1      0         0
    6168                     1                 1      0         0
    6169                     0                 0      0         0
    6170                     0                 1      0         0
    6171                     1                 0      0         1

          Native_American  Other  Female  Misdemeanor  Two_yr_Recidivism
    0                   0      1       0            0                  0
    1                   0      0       0            0                  1
    2                   0      0       0            0                  1
    3                   0      1       0            1                  0
    4                   0      0       0            0                  1
    ...               ...    ...     ...          ...                ...
    6167                0      0       0            0                  0
    6168                0      0       0            0                  0
    6169                0      1       0            0                  0
    6170                0      0       1            1                  0
    6171                0      0       1            0                  1

    [6172 rows x 12 columns]
    -------------- Information ---------------
    Dataset name: ../../../dataset/compas.csv
    nFeatures (nAttributes, with the labels): 12
    nInstances (nObservations): 6172
    nLabels: 2
    --------------- Evaluation ---------------
    method: HoldOut
    output: DT
    learner_type: Classification
    learner_options: {'max_depth': None, 'random_state': 0}
    --------- Evaluation Information ---------
    For the evaluation number 0:
    metrics:
       accuracy: 65.33477321814254
    nTraining instances: 4320
    nTest instances: 1852
    --------------- Explainer ----------------
    For the evaluation number 0:
    **Decision Tree Model**
    nFeatures: 11
    nNodes: 539
    nVariables: 46
    --------------- Instances ----------------
    number of instances selected: 1
    ----------------------------------------------

We compute all the contrastive reasons for this instance:

```python
explainer = Explainer.initialize(model, instance)
print("instance:", instance)
print("prediction:", prediction)
print()

contrastive_reasons = explainer.contrastive_reason(n=Explainer.ALL)
print("number of contrastive reasons:", len(contrastive_reasons))

# Check every returned reason with is_contrastive_reason.
all_are_contrastive = True
for contrastive in contrastive_reasons:
    if not explainer.is_contrastive_reason(contrastive):
        print(f"{contrastive} is not a contrastive reason.")
        all_are_contrastive = False

if all_are_contrastive:
    print("all reasons are indeed contrastive.")
```

    instance: [0 0 1 0 0 0 0 0 1 0 0]
    prediction: 0

    number of contrastive reasons: 19
    all reasons are indeed contrastive.

Other types of explanations are presented in the Explanations Computation page.